Agentic CX

Multimodal AI is reshaping online shopping into a human-like, in-store experience. With context-aware conversations, virtual try-ons, and personalized bundles, AI becomes a 24×7 style advisor—boosting conversions, reducing returns, and increasing customer value. This revolution will redefine digital consumer experiences across retail and beyond.

April 9, 2026

How do multimodal AI capabilities transform the online shopping experience to mimic real in-store buying?

AI is ushering in massive changes to online shopping. It is the dawn of an era in which each of us has a personal style advisor. While voice-based input has been in use alongside text for the last few years, searches do not carry context from one to the next: each search is discrete. This makes it hard to find the right item unless the shopper spends significant time or is not particular about the choice.

A Digital Brain, combined with context engineering, helps elevate the understanding of customer intent while maintaining the context of the conversation across queries. This leads to more personalized recommendations.
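To make the idea of context carried across queries concrete, here is a minimal sketch. The `ShoppingSession` class and its fields are hypothetical names chosen for illustration, and preference extraction is assumed to happen elsewhere (for example, in a model call).

```python
from dataclasses import dataclass, field


@dataclass
class ShoppingSession:
    """Hypothetical per-customer session that carries context across queries."""
    preferences: dict = field(default_factory=dict)
    history: list = field(default_factory=list)

    def update(self, query: str, **extracted) -> None:
        # Record the raw query and merge any newly extracted preferences.
        self.history.append(query)
        self.preferences.update(extracted)

    def build_context(self, query: str) -> str:
        # Combine remembered preferences with the new query for the model.
        prefs = ", ".join(f"{k}={v}" for k, v in sorted(self.preferences.items()))
        return f"Known preferences: {prefs or 'none'}\nCustomer asks: {query}"


session = ShoppingSession()
session.update("I need a jacket for hiking", category="jacket", activity="hiking")
session.update("something under $150", max_price=150)
print(session.build_context("do you have it in blue?"))
```

The third query ("do you have it in blue?") only makes sense because the earlier preferences travel with it, which is the difference from discrete searches.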

Online retailers can start with a text-chat-based conversational experience before upgrading to voice-based conversations.

These agents will grow more sophisticated and gain effectively unlimited memory and context. It is similar to having a style advisor who remembers all your purchases and all your interactions. The ability of companies to leverage this information will be a big differentiator in building customer advocacy, as recommendations can be significantly personalized. In August 2025, OpenAI released gpt-realtime, its most advanced speech-to-speech model yet. This will change the way online shopping is done, and the cost of tokens will come down.

What multiplicative capabilities does it deliver?

- Consultative discussions leading to better recommendations

- Customers can speak or chat naturally. The customer's sentence construction and tone of voice can provide insight into customer sentiment.

- Consultative discussions make the shopping experience more engaging, with recommendations shared through voice and visuals, including videos.

- Interactive discussions draw instant feedback from consumers, enabling rapid tailoring of recommendations.

- Computer vision-led virtual try-ons: while this functionality has existed for a long time, its sophistication has improved significantly with LLMs. This can reduce returns by over 30%.

- Product Bundles – Based on a high-level shopping objective, e.g. going camping or attending a friend’s wedding, the recommendation engine can suggest the entire set of products needed.

- Deep Product Knowledge – Shopping assistants will be able to share product details that are sometimes difficult for human associates to remember and highlight.

- Better discovery of unique products – Shopping assistants can suggest products based on customer preferences in a manner similar to what a human associate would do.
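As a rough illustration of the product bundle idea above, the sketch below maps a shopping objective to the categories it needs and picks one in-stock product per category. The templates, catalog entries, and the "cheapest in stock" rule are all assumptions made for the example; a real recommendation engine would rank on far more signals.

```python
# Hypothetical mapping from a shopping objective to the categories it needs.
BUNDLE_TEMPLATES = {
    "camping": ["tent", "sleeping bag", "headlamp", "camp stove"],
    "wedding guest": ["formal outfit", "dress shoes", "gift"],
}

# Toy catalog: category -> list of (product name, price, in_stock).
CATALOG = {
    "tent": [("Trail Tent 2P", 129.0, True), ("Summit Tent 4P", 249.0, True)],
    "sleeping bag": [("Down Bag -5C", 99.0, False), ("Synthetic Bag 0C", 59.0, True)],
    "headlamp": [("Beam 300", 25.0, True)],
    "camp stove": [],
}


def suggest_bundle(objective, catalog=CATALOG, templates=BUNDLE_TEMPLATES):
    """Return (bundle, missing): cheapest in-stock item per needed category."""
    bundle, missing = [], []
    for category in templates.get(objective, []):
        in_stock = [p for p in catalog.get(category, []) if p[2]]
        if not in_stock:
            missing.append(category)  # nothing available to recommend
            continue
        name, price, _ = min(in_stock, key=lambda p: p[1])
        bundle.append((category, name, price))
    return bundle, missing


bundle, missing = suggest_bundle("camping")
print(bundle)
print("not available:", missing)
```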

What impact will these new capabilities bring?

- Improved Conversion: Conversation-based interactions lead to 35-65% higher conversion rates

- Increased AOV: Better product discovery leads to 25-40% higher average order value

- Reduced Returns: Accurate visual try-ons result in 30-50% fewer returns

- Customer Lifetime Value: This can more than double (2X+)
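To see how these effects compound, here is a small worked calculation. The baseline figures (visitors, conversion rate, order value, return rate) are made up for illustration and are not from this article; the calculation also assumes returned orders are fully refunded and applies only the low end of each quoted range.

```python
def net_revenue(visitors, conversion_rate, avg_order_value, return_rate):
    """Revenue kept after returns, assuming returned orders are fully refunded."""
    orders = visitors * conversion_rate
    return orders * avg_order_value * (1 - return_rate)


# Hypothetical baseline for illustration only; not figures from this article.
baseline = dict(visitors=1000, conversion_rate=0.02,
                avg_order_value=80.0, return_rate=0.20)

# Apply the low end of each range quoted above.
improved = dict(
    visitors=1000,
    conversion_rate=baseline["conversion_rate"] * 1.35,  # +35% conversion
    avg_order_value=baseline["avg_order_value"] * 1.25,  # +25% AOV
    return_rate=baseline["return_rate"] * 0.70,          # 30% fewer returns
)

before = net_revenue(**baseline)
after = net_revenue(**improved)
print(f"before={before:.0f} after={after:.0f} uplift={after / before:.2f}x")
```

Under these assumed inputs, the three improvements multiply rather than add, giving roughly 1.8x net revenue for the same traffic.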

What design and technology approach is needed to fulfil the promise?

Technical challenges to focus on

- Guardrails for the styling agent so that it responds only to tasks within the scope of shopping.

- Fine-grained use-case prioritization – Take a task-based approach to agentification and prove ROI before automating the entire workflow.

- AI Economics – Cost vs ROI – Token costs also need to be managed, and different tasks call for different models.

- Performance – While performance is important, design can help us render the right engagement even when there is some lag.
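The AI Economics point, choosing different models for different tasks, can be sketched as a simple routing table. The tier names, task names, and per-token prices below are placeholders invented for the example, not real model names or prices.

```python
# Hypothetical model tiers and prices per 1K tokens; real values will differ.
MODEL_TIERS = {
    "small": {"price_per_1k_tokens": 0.0002},
    "large": {"price_per_1k_tokens": 0.01},
}

# Hypothetical mapping of tasks to the cheapest tier assumed to be good enough.
TASK_TO_TIER = {
    "intent_classification": "small",
    "faq_answer": "small",
    "style_consultation": "large",
}


def pick_model(task: str) -> str:
    # Unknown tasks fall back to the larger tier to protect answer quality.
    return TASK_TO_TIER.get(task, "large")


def estimate_cost(task: str, tokens: int) -> float:
    tier = pick_model(task)
    return tokens / 1000 * MODEL_TIERS[tier]["price_per_1k_tokens"]


for task in ("intent_classification", "style_consultation"):
    print(task, pick_model(task), f"{estimate_cost(task, 2000):.4f}")
```

Keeping the mapping in data rather than code makes it easier to revisit as token prices change and as ROI is proven task by task.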

Design

- The application's experience design needs to feel fluid and agentic.

- Delays and latency need to be managed through appropriate design.

- The redesign of the interface for an interactive shopping experience needs to be worked out with product and category managers to ensure business alignment.
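One way to manage latency through design is to show an interim message when the answer is slow to arrive. The sketch below uses `asyncio` for this; `slow_recommendation` is a stand-in for a real model or tool call, and the `patience` threshold and message wording are assumptions for the example.

```python
import asyncio


async def slow_recommendation(delay: float) -> str:
    # Stand-in for a model or tool call; the delay simulates latency.
    await asyncio.sleep(delay)
    return "Here are three jackets that match your style."


async def respond(delay: float, patience: float = 0.05) -> list[str]:
    """Return the messages shown to the shopper, adding a filler if the answer is slow."""
    messages = []
    task = asyncio.create_task(slow_recommendation(delay))
    # Wait up to `patience` seconds before acknowledging the shopper.
    done, _ = await asyncio.wait({task}, timeout=patience)
    if not done:
        messages.append("Let me check the latest catalog for you...")
    messages.append(await task)
    return messages


print(asyncio.run(respond(delay=0.2)))
```

A fast answer is shown on its own, while a slow one is preceded by the acknowledgement, so the conversation does not appear to stall.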

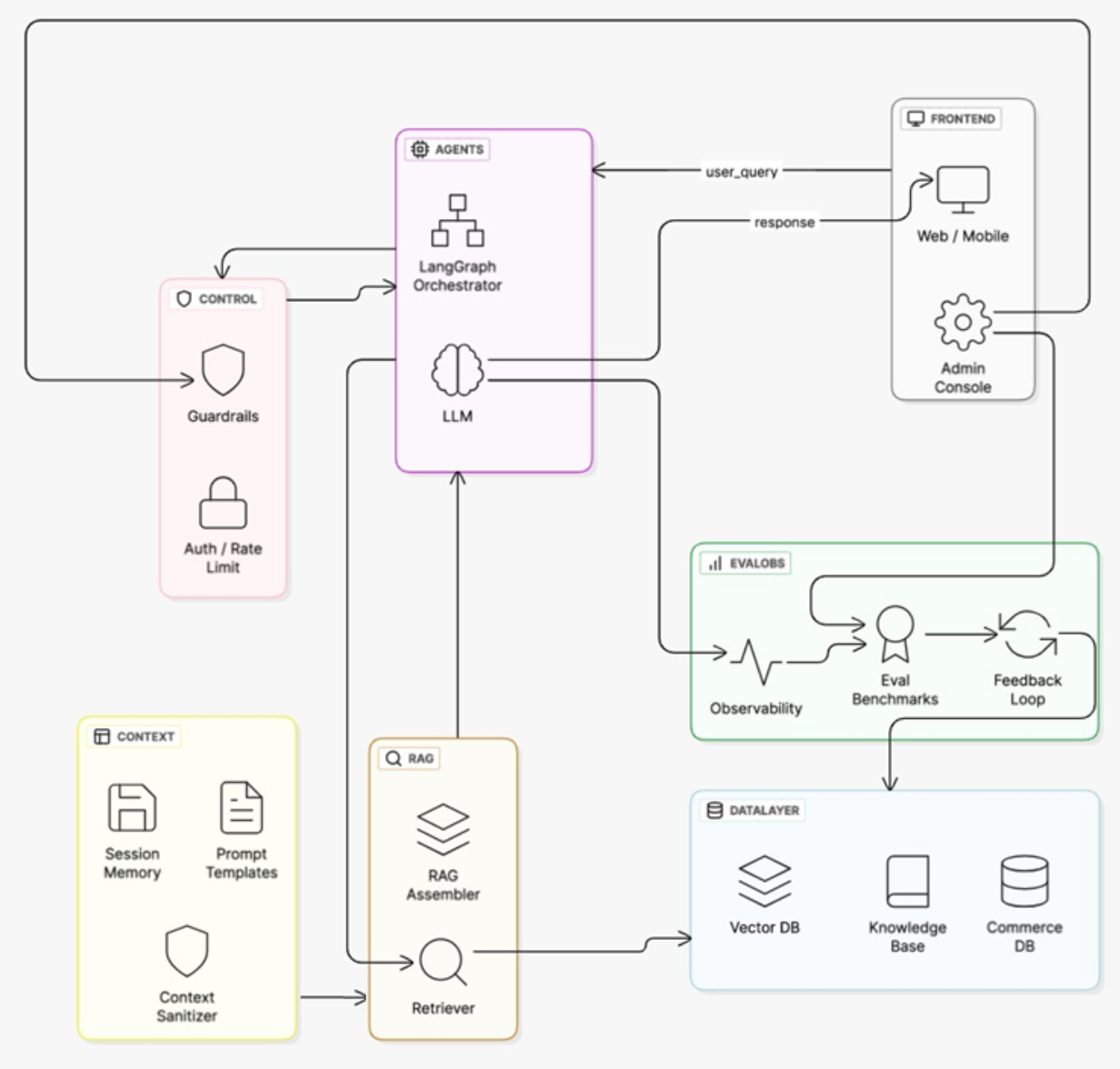

The diagram above shows the building blocks of a typical styling agent or shopper assistant.

Why is every block in the blueprint important, and what role does each play?

Frontend

Web / Mobile → Customers ask shopping questions and receive answers.

Admin Console → Store managers configure, monitor, and tune the advisor.

Control

Guardrails → Prevent unsafe or irrelevant recommendations.

Auth / Rate Limit → Ensures only valid users get access and avoids overload.
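The control layer can be illustrated with two small checks: a scope guardrail and a per-user rate limit. Both are deliberately simple stand-ins. The keyword list is hypothetical, and a production guardrail would more likely use a classifier or policy model than keyword matching.

```python
from collections import defaultdict, deque

# Hypothetical vocabulary that marks a message as shopping-related.
SHOPPING_TERMS = {"buy", "price", "size", "dress", "jacket",
                  "shoes", "return", "order", "outfit"}


def in_scope(message: str) -> bool:
    # Crude scope check: at least one shopping term must appear.
    words = {w.strip(".,!?").lower() for w in message.split()}
    return bool(words & SHOPPING_TERMS)


class RateLimiter:
    """Sliding-window limiter: at most max_requests per window per user."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)

    def allow(self, user_id: str, now: float) -> bool:
        hits = self._hits[user_id]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


limiter = RateLimiter(max_requests=2, window_seconds=60)
print(in_scope("Do you have this jacket in size M?"))
print(in_scope("Write my history essay"))
print([limiter.allow("user-1", t) for t in (0, 1, 2, 61)])
```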

Agents

LangGraph Orchestrator → Routes user queries to the right tools and data sources.

LLM → Generates natural shopping advice from data + context.

Context

Session Memory → Remembers user preferences across the conversation.

Prompt Templates → Provide standard patterns for common queries such as product search.

Context Sanitizer → Cleans and normalizes user inputs.
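A minimal sketch of how the sanitizer and a prompt template might fit together is shown below. The template wording, the `sanitize` rules, and the 200-character cap are assumptions for illustration, not a description of any particular product.

```python
import re
from string import Template

# Hypothetical template for a product-search query.
PRODUCT_SEARCH_TEMPLATE = Template(
    "You are a shopping advisor. Preferences: $preferences\n"
    "Find products matching: $query"
)


def sanitize(text: str, max_len: int = 200) -> str:
    # Replace control characters, collapse whitespace, and cap the length.
    text = re.sub(r"[\x00-\x1f\x7f]", " ", text)
    text = re.sub(r"\s+", " ", text).strip()
    return text[:max_len]


def build_prompt(raw_query: str, preferences: str) -> str:
    return PRODUCT_SEARCH_TEMPLATE.substitute(
        preferences=sanitize(preferences), query=sanitize(raw_query)
    )


print(build_prompt("  red\n\tdress   for a summer party ", "size M, budget 100"))
```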

RAG

Retriever → Finds relevant product, review, and guide data.

RAG Assembler → Merges retrieved info with reasoning for accurate answers.
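The retriever and assembler roles can be sketched with a toy example. Word overlap stands in here for the semantic search a vector database would provide, and the documents, IDs, and prompt wording are invented for illustration.

```python
import re

# Toy documents standing in for product, review, and guide data.
DOCS = [
    {"id": "guide-1", "text": "Waterproof jackets keep you dry on rainy hikes"},
    {"id": "review-7", "text": "These running shoes feel light and breathable"},
    {"id": "faq-3", "text": "Returns are accepted within 30 days of delivery"},
]


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs=DOCS, k: int = 2) -> list:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d["text"])), d) for d in docs]
    scored = [s for s in scored if s[0] > 0]  # drop documents with no overlap
    scored.sort(key=lambda s: s[0], reverse=True)
    return [d for _, d in scored[:k]]


def assemble_prompt(query: str, retrieved: list) -> str:
    # Put the retrieved snippets in front of the question, with source IDs.
    sources = "\n".join(f"[{d['id']}] {d['text']}" for d in retrieved)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


question = "waterproof jacket for rainy hikes"
print(assemble_prompt(question, retrieve(question)))
```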

Data Layer

Vector DB → Enables semantic product and query search.

Knowledge Base → Stores FAQs, guides, and product details.

Commerce DB → Holds live pricing, stock, and order data.

Eval/Obs

Observability → Tracks advisor performance and reliability.

Eval Benchmarks → Tests product query and recommendation accuracy.

Feedback Loop → Improves results based on customer feedback.
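As an example of what an eval benchmark might measure, the sketch below computes a hit rate at k: the share of test queries for which the expected product appears in the top k recommendations. The test cases and the `toy_recommend` function are hypothetical placeholders for a real benchmark set and the real advisor.

```python
def hit_rate_at_k(cases, recommend, k: int = 3) -> float:
    """Fraction of cases whose expected product is in the top-k recommendations."""
    hits = sum(1 for c in cases if c["expected"] in recommend(c["query"])[:k])
    return hits / len(cases)


def toy_recommend(query: str) -> list:
    # Placeholder recommender; a real one would call the advisor under test.
    q = query.lower()
    if "rain" in q:
        return ["rain-jacket", "umbrella"]
    if "run" in q:
        return ["trail-shoes", "running-socks"]
    return ["gift-card"]


# Hypothetical benchmark cases.
CASES = [
    {"query": "something for rainy days", "expected": "rain-jacket"},
    {"query": "running gear", "expected": "running-shoes"},
    {"query": "birthday present", "expected": "gift-card"},
]

print(f"hit rate@3 = {hit_rate_at_k(CASES, toy_recommend):.2f}")
```

Tracking a metric like this over time, alongside customer feedback, gives the feedback loop something concrete to improve.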

Implementation

- Both web and mobile shopping will be significantly transformed. New open-weight models lower the cost of model APIs.

- With OpenAI releasing the gpt-oss-20b model, which has 3.6B active parameters (about 21B in total), models of this class can be deployed on edge devices and potentially bundled with the mobile app.

- Grounding is one of the key aspects of implementing such an agent. Giving the agent access to live catalog data for search is paramount.

- Guardrails and business rules are essential for any agentic activity.
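Grounding can be as simple as checking every model-suggested product against live catalog data before it is shown. The sketch below assumes a hypothetical in-memory catalog keyed by SKU; in practice this would be a query against the commerce database.

```python
# Hypothetical snapshot of live catalog data keyed by SKU.
LIVE_CATALOG = {
    "sku-1": {"name": "Linen Blazer", "price": 120.0, "stock": 4},
    "sku-2": {"name": "Silk Scarf", "price": 45.0, "stock": 0},
}


def ground(suggested_skus, catalog=LIVE_CATALOG) -> list:
    """Keep only suggestions that exist in the catalog and are in stock."""
    grounded = []
    for sku in suggested_skus:
        item = catalog.get(sku)
        if item is None or item["stock"] <= 0:
            continue  # unknown SKU (possibly hallucinated) or out of stock
        grounded.append({"sku": sku, **item})
    return grounded


# "sku-9" does not exist and "sku-2" is out of stock, so only "sku-1" survives.
print(ground(["sku-1", "sku-2", "sku-9"]))
```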

Agent Hierarchy

- Style Advisor Agent (orchestrator)

- Try On Assistant

- Product Catalog Agent

- Inventory Agent

- Ordering Agent

- Delivery Agent
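The hierarchy above can be sketched as an orchestrator that delegates each request to one sub-agent. This is plain Python rather than LangGraph code, and the keyword routing is an assumption made to keep the example short; a real orchestrator would more likely let a model classify intent.

```python
from typing import Callable


def try_on_assistant(request: str) -> str:
    return f"[try-on] rendering preview for: {request}"


def product_catalog_agent(request: str) -> str:
    return f"[catalog] searching products for: {request}"


def inventory_agent(request: str) -> str:
    return f"[inventory] checking stock for: {request}"


def ordering_agent(request: str) -> str:
    return f"[ordering] placing order for: {request}"


def delivery_agent(request: str) -> str:
    return f"[delivery] tracking shipment for: {request}"


SUB_AGENTS: dict[str, Callable[[str], str]] = {
    "try_on": try_on_assistant,
    "catalog": product_catalog_agent,
    "inventory": inventory_agent,
    "ordering": ordering_agent,
    "delivery": delivery_agent,
}

# Hypothetical routing rules, checked in order; first match wins.
ROUTING_KEYWORDS = [
    ("try on", "try_on"),
    ("in stock", "inventory"),
    ("deliver", "delivery"),
    ("order", "ordering"),
]


def style_advisor(request: str) -> str:
    """Orchestrator: pick a sub-agent by keyword, defaulting to catalog search."""
    text = request.lower()
    for keyword, agent in ROUTING_KEYWORDS:
        if keyword in text:
            return SUB_AGENTS[agent](request)
    return SUB_AGENTS["catalog"](request)


for r in ("Can I try on this blazer?", "When will my order be delivered?",
          "Show me summer dresses"):
    print(style_advisor(r))
```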

What further changes are expected in the near future?

The question for enterprises isn’t whether multimodal AI will become the standard for customer interaction; it’s whether they’ll lead this transformation or be forced to catch up as competitors redefine what customers expect from digital shopping experiences. This will affect not only retail shopping but also insurance buying, loan disbursal, and travel booking. Every consumer shopping experience will be reinvented.

OpenAI has improved its streaming interactive models. More models will be released in short order, and costs will fall. This will drive voice and natural language to become the primary mode of online shopping, and digital interfaces will change to align with this trend.

See Agentic AI in Action: CatalogNow

At Sumvec, we have built CatalogNow — an AI-powered product catalog management platform that deploys 11 autonomous agents to automate product introduction, content enrichment, compliance auditing, and multi-channel publishing. It is a real-world example of agentic AI transforming enterprise commerce operations. Learn more about CatalogNow →